Beginner's 101 Guide to Anduril's Lattice, America's New Robot Warfare Brain: What It Is, How It Works, and Why It Matters

Summary

Imagine you are a soldier standing in a desert with a hundred different alarms going off at once.

Drones are flying overhead. Some are enemy drones, some are friendly.

Radar systems are beeping. Cameras are showing movement.

Your radio is filled with voices. How do you know which threat to deal with first — and how do you deal with it faster than the enemy?

This is exactly the problem that Anduril's Lattice platform is designed to solve.

Lattice is a software system built by Anduril Industries, a defense technology company founded in California in 2017.

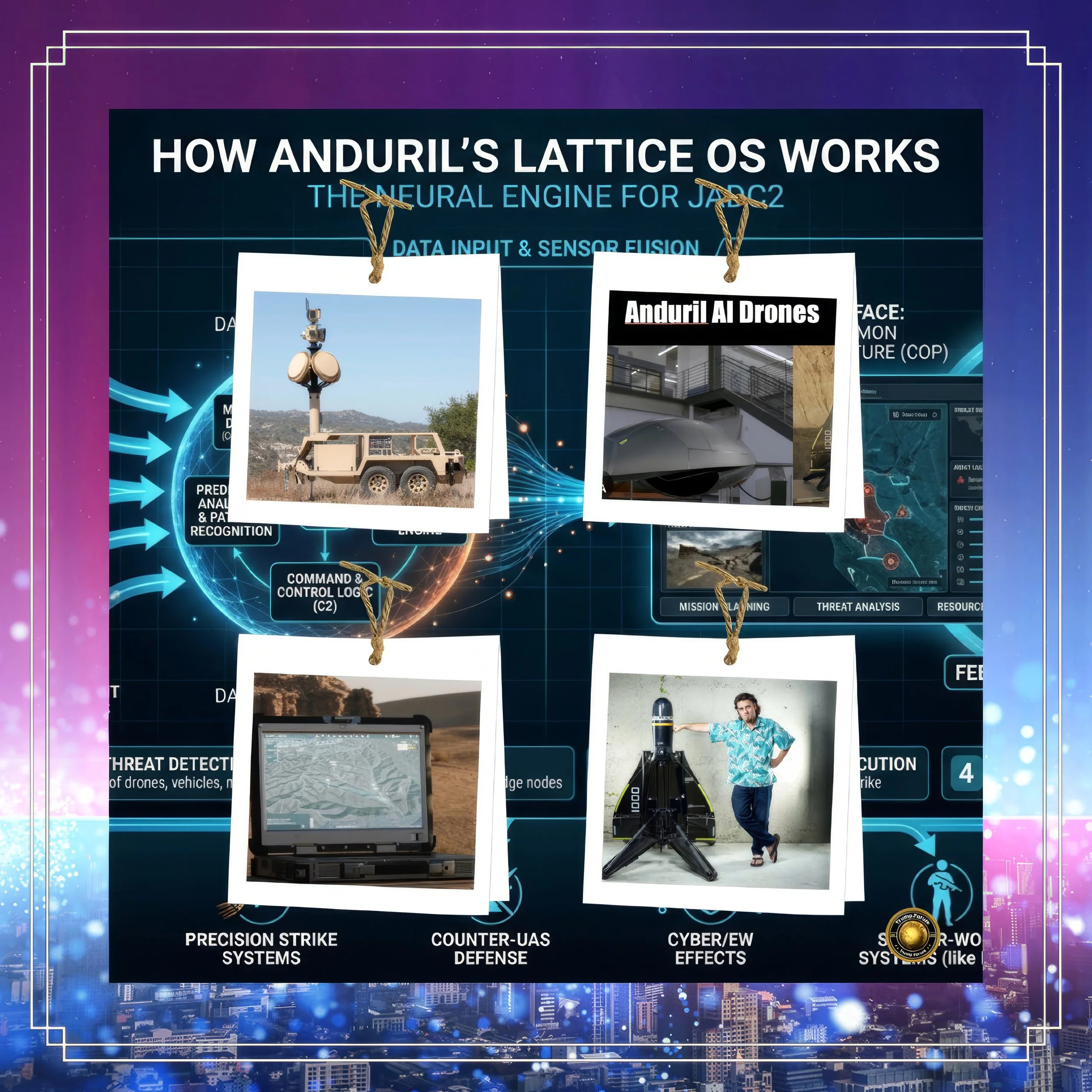

Think of Lattice as the brain of a very large, very fast robot army.

It connects sensors — cameras, radars, microphones, satellites — from hundreds of different locations and translates all that information into a clear picture that a soldier or commander can understand and act on.

The soldier does not need to look at 100 different screens.

Lattice shows them one screen with the most important information, already organized and ranked by importance.

That is the basic idea.
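To make "organized and ranked by importance" concrete, here is a minimal sketch in Python. It is not Anduril's code: the `Alert` fields, the sensor names, and the simple urgency-times-confidence score are all assumptions invented for illustration.

```python
from dataclasses import dataclass


@dataclass
class Alert:
    source: str        # which sensor reported it (hypothetical labels)
    description: str
    urgency: float     # 0.0 (ignore) to 1.0 (act now), assumed to be estimated upstream
    confidence: float  # 0.0 to 1.0, how sure the sensor is


def rank_alerts(alerts, top_n=3):
    """Return the top_n alerts, highest urgency-times-confidence score first."""
    ranked = sorted(alerts, key=lambda a: a.urgency * a.confidence, reverse=True)
    return ranked[:top_n]


alerts = [
    Alert("radar-1", "fast object approaching", urgency=0.9, confidence=0.8),
    Alert("camera-4", "movement near fence", urgency=0.4, confidence=0.9),
    Alert("radio", "routine check-in", urgency=0.1, confidence=1.0),
    Alert("radar-2", "unidentified drone", urgency=0.8, confidence=0.5),
]

# Print the three alerts a single screen would show first.
for alert in rank_alerts(alerts):
    print(alert.source, "-", alert.description)
```

A real system would use far richer scoring, but the idea is the same: many inputs go in, and a short ranked list comes out.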

What makes Lattice special compared to older systems is its speed and flexibility.

Old military software was built like a very rigid machine: it could only talk to specific equipment from specific manufacturers, and connecting new technology to it took months or years.

Lattice is built with an "open architecture," meaning it can connect to almost any sensor or weapon system from almost any manufacturer.

It is like a universal charger that works with any phone, instead of a charger that only works with one brand.
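One common way software achieves this kind of "universal charger" behavior is the adapter pattern: each vendor's device gets a small translator that converts its own format into one shared format. The sketch below is an illustration under that assumption, not Lattice's actual interface. The class names, message formats, and coordinates are made up.

```python
from abc import ABC, abstractmethod


class SensorAdapter(ABC):
    """Shared interface that every vendor adapter must implement (hypothetical)."""

    @abstractmethod
    def read(self) -> dict:
        """Return one detection as {'kind': str, 'lat': float, 'lon': float}."""


class VendorARadar(SensorAdapter):
    def read(self) -> dict:
        raw = "DRONE;34.05;-117.60"  # pretend vendor A sends semicolon-separated text
        kind, lat, lon = raw.split(";")
        return {"kind": kind.lower(), "lat": float(lat), "lon": float(lon)}


class VendorBCamera(SensorAdapter):
    def read(self) -> dict:
        raw = {"label": "Vehicle", "position": (34.10, -117.55)}  # pretend vendor B sends a dict
        lat, lon = raw["position"]
        return {"kind": raw["label"].lower(), "lat": lat, "lon": lon}


def collect(sensors):
    """Gather detections from any mix of adapters into one common list."""
    return [sensor.read() for sensor in sensors]


picture = collect([VendorARadar(), VendorBCamera()])
print(picture)
```

Adding a new manufacturer then means writing one new adapter class, not rebuilding the whole system.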

In March 2026, the United States Army signed a contract with Anduril worth up to $20 billion over 10 years to make Lattice the primary technology backbone for its military operations, especially for shooting down enemy drones.

This is a huge deal. It means the U.S. Army is making a decade-long bet on Anduril's technology.

The contract replaced more than 120 separate smaller contracts with one single agreement — imagine combining 120 different phone plans into 1 simple plan that covers everything.

The key technology inside Lattice is a concept called a data mesh.

A data mesh means that information does not have to travel from every sensor to a central computer and back again — instead, sensors can talk directly to each other, sharing information instantly across the battlefield.

This is important because in war, the central computer might be destroyed or blocked by enemy jamming. With a mesh network, if one node is destroyed, the others keep working. It is like a spider web: cutting one thread does not destroy the whole web.
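The spider web idea can be checked with a toy model. The sketch below compares a hub-and-spoke layout with a small ring-shaped mesh and asks which nodes can still reach each other after one node is destroyed. The node names and layouts are invented for illustration and say nothing about how Lattice is actually wired.

```python
from collections import deque


def reachable(links, start, destroyed=frozenset()):
    """Return the set of nodes reachable from start, skipping destroyed nodes."""
    if start in destroyed:
        return set()
    seen = {start}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for neighbor in links.get(node, ()):
            if neighbor not in destroyed and neighbor not in seen:
                seen.add(neighbor)
                queue.append(neighbor)
    return seen


# Hub-and-spoke: every sensor talks only to a central computer called "hub".
hub = {"hub": ["a", "b", "c", "d"], "a": ["hub"], "b": ["hub"], "c": ["hub"], "d": ["hub"]}

# Mesh: sensors a to d form a ring and talk to their neighbors directly.
mesh = {"a": ["b", "d"], "b": ["a", "c"], "c": ["b", "d"], "d": ["c", "a"]}

print(sorted(reachable(hub, "a", destroyed={"hub"})))  # ['a']
print(sorted(reachable(mesh, "a", destroyed={"b"})))   # ['a', 'c', 'd']
```

Losing the hub isolates every sensor, while losing one mesh node leaves the rest connected.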

Lattice also connects to another important Pentagon system called Maven Smart System (MSS), built by a company called Palantir.

Think of Palantir's system as the intelligence analyst sitting in a headquarters office, reading reports and figuring out which buildings might be enemy targets.

Think of Lattice as the team on the ground that carries out the mission.

The two systems are designed to work together: Palantir processes the big-picture intelligence, and Lattice executes the real-time response.

In December 2024, Palantir and Anduril even officially partnered to combine their technologies, recognizing that they are stronger together than apart.

So has Lattice actually been used in real wars? Yes. In February 2026, Iran began launching thousands of cheap drones — called Shaheds — at American and allied forces in the Middle East as part of a military operation called Operation Epic Fury.

Anduril's president Matthew Steckman confirmed in March 2026 that Anduril systems, including Lattice, were actively being used to track and shoot down these drones.

This is real combat, not a test or an exercise.

However, Anduril's technology has also had some embarrassing failures.

In November 2025, Reuters reported that two of Anduril's Altius drones crashed one after the other during a test at a U.S. Air Force base in Florida — both fell 8,000 feet straight into the ground.

More than a dozen Navy drone boats failed during a different exercise.

Ukrainian soldiers using Anduril's Ghost drone had to stop using it because Russian jamming signals were confusing the drone's guidance system.

These failures show that even smart technology has serious problems when tested under real conditions.

The most tragic example of what can go wrong with AI targeting systems happened on February 28th, 2026, in a city called Minab in Iran.

On the first day of a U.S.-Israeli military operation, a missile struck a girls' primary school, killing at least 165 children and teachers.

The investigation found that the school used to be part of an Iranian military compound, but had been converted into a school years before.

The targeting data used by the military — fed into the Pentagon's AI systems — had never been updated to reflect this change.

So when an AI system analyzed the building, it saw an old military facility. In reality, it was a classroom full of children.

This is called a data governance failure, and it is one of the most important risks of using AI in war. AI systems do not know when their information is outdated.

They work with whatever data they are given. If that data is wrong — because a building changed use, because a map was not updated, because someone made an error in the records — the AI will confidently make a wrong recommendation, and a human operator under time pressure may approve it without knowing the truth.

Does Lattice have tools to prevent this kind of mistake?

Yes, to some extent. Lattice tags every piece of sensor data with information about how old it is, where it came from, and how reliable it is.

This provenance system is supposed to help operators and AI models judge whether information is fresh enough to act on.
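As a rough picture of what such a tag could look like, the sketch below attaches a source, a timestamp, and a reliability score to each piece of data, then flags anything too old or too unreliable for a human to review. The field names and thresholds are assumptions chosen for this example, not Lattice's real schema.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class Observation:
    value: str             # what was reported
    source: str            # where it came from (hypothetical labels)
    observed_at: datetime  # when it was recorded
    reliability: float     # 0.0 to 1.0, assumed to be supplied by the source


def review_reasons(obs, now, max_age=timedelta(minutes=5), min_reliability=0.7):
    """Return a list of reasons this observation needs human review (empty if none)."""
    reasons = []
    if now - obs.observed_at > max_age:
        reasons.append("stale")
    if obs.reliability < min_reliability:
        reasons.append("low reliability")
    return reasons


now = datetime(2026, 1, 1, 12, 0, tzinfo=timezone.utc)
fresh = Observation("drone sighted", "radar-1", now - timedelta(seconds=30), 0.9)
old = Observation("site use: warehouse", "archived record", now - timedelta(days=3650), 0.9)

print(review_reasons(fresh, now))  # []
print(review_reasons(old, now))    # ['stale']
```

The archived record looks reliable on paper but is ten years old, so the check flags it as stale and does not pass it along as current fact.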

Lattice also uses its mesh network to collect real-time sensor data directly from the battlefield, rather than relying on old reports or maps. These are genuine improvements over older systems.

But no technology can fully solve the problem of stale data about buildings or infrastructure that change use in conflict zones.

Anduril's open architecture approach — allowing many different sensors from many different companies to feed data into Lattice — helps increase the amount of fresh information available.

But in a contested environment where enemy forces are actively trying to jam, spoof, and deceive sensors, the problem does not disappear. It becomes harder.

The competition between Anduril and Palantir for Pentagon contracts is often compared to a race between two tech giants.

Palantir is the older company, with deeper roots in intelligence analysis and a longer track record at the Pentagon.

Anduril is the newer, faster-moving company focused on autonomous systems and physical hardware.

As of 2025, Palantir was losing its dominance as Anduril's star rose rapidly.

The Army contract, worth up to $20 billion, is the clearest signal that the Pentagon is diversifying its bets by backing Anduril's hardware-integrated AI alongside Palantir's intelligence analytics.

What is Anduril worth today?

As of January 2026, the company's valuation was approximately $32.5 billion.

A new funding round in early 2026 was expected to nearly double that figure, potentially reaching $60 billion.

An IPO — meaning a public stock market listing — has been discussed but not confirmed. Analysts estimate the company could be worth between $40 billion and $90 billion if it goes public, depending on how many major contracts it wins.

Given that the Army contract alone is worth up to $20 billion over 10 years, that upper range is not unrealistic.

What is next for Lattice?

The roadmap is ambitious. Lattice is being extended into space: a $99.7 million contract from the U.S. Space Force's Space Systems Command tasks Anduril with using Lattice to modernize the Space Surveillance Network by the end of 2026.

It is also being integrated with the Army's IBCS-M fire control system, which will allow one operator to manage multiple counter-drone threats simultaneously using automated fire control powered by Lattice.

In the longer term, Lattice is expected to play a role in defending against hypersonic missiles — weapons that travel so fast that human reaction times are too slow for intercept, making AI decision-making a necessity rather than a convenience.
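A little arithmetic shows how small the time window becomes. The sketch below assumes a threat is first detected 100 km away and flies straight in at constant speed. Both are simplifications chosen for illustration. It uses the sea-level speed of sound (about 343 m/s), and "hypersonic" is taken to mean Mach 5 or faster.

```python
SPEED_OF_SOUND_M_S = 343.0  # approximate speed of sound at sea level, 20 degrees C


def seconds_to_impact(distance_km, mach):
    """Time for an object at constant speed to cover distance_km, in seconds."""
    speed_m_s = mach * SPEED_OF_SOUND_M_S
    return distance_km * 1000 / speed_m_s


# Compare a subsonic threat with two hypersonic speeds over the same 100 km.
for mach in (0.8, 5, 10):
    print(f"Mach {mach}: about {seconds_to_impact(100, mach):.0f} seconds")
```

Under these assumptions, a Mach 0.8 threat leaves about six minutes, while a Mach 5 threat leaves under a minute. That minute has to cover detection, identification, the decision to fire, and the interceptor's own flight time, which is why so little of it is left for a human.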

The story of Lattice is ultimately the story of a bet: up to $20 billion staked on the idea that software, AI, and autonomous systems will define the future of war.

That bet may well prove correct.

But as the children of Minab remind the world, the cost of being wrong about data quality, human oversight, and the limits of AI confidence is not measured in dollars or in contract penalties. It is measured in lives.

The most important next step for Anduril, for Palantir, and for the Pentagon is not the next software update or the next funding round. It is building the governance architecture — the rules, the checks, the mandatory human verification steps — that ensure the algorithm's speed does not outrun the human judgment that must always remain its master.