Anduril's Lattice Platform: Architecture, Accountability, and the Future of Autonomous Warfare in American Defense Strategy

Executive Summary

Anduril's Lattice: The Pentagon's New AI War Brain and Its $20 Billion Gamble

In March 2026, the United States Army awarded Anduril Industries a landmark enterprise contract valued at up to $20 billion over 10 years, institutionalizing the company's Lattice platform as the central software architecture for multi-domain autonomous operations.

This contract consolidates more than 120 separate procurement actions into a single vehicle, establishing Lattice not merely as a product but as a governing operating framework for the American military's AI-driven future.

The decision comes amid a seismic restructuring of the Pentagon's technology acquisition philosophy — one that privileges software-first, Silicon Valley-born firms over legacy defense behemoths, and that carries profound implications for battlefield effectiveness, civil liberties, international humanitarian law, and geopolitical power.

The Minab school strike of February 28th, 2026, in which at least 165 schoolgirls were killed during the opening salvos of Operation Epic Fury — a joint U.S.-Israeli military campaign against Iran — has cast a long shadow over this transition.

Preliminary investigations suggest the strike resulted from outdated data fed into targeting systems, potentially including AI-assisted decision-support tools connected to the Maven program.

The incident forces a reckoning with the systemic vulnerabilities that accompany the rapid deployment of AI-targeting infrastructure and with the structural question of whether Lattice's architecture, governance model, and data ingestion protocols are genuinely equipped to prevent analogous tragedies in future operations.

This analysis examines Lattice's technical architecture, its integration with the Maven Smart System (MSS), its deployment record in active conflict theaters, its known failure modes, the strategic competition between Anduril and Palantir, and the institutional roadmap that the Pentagon has charted for its most ambitious technological bet in a generation.

Introduction: The Software Turn in American Defense

How Anduril's Lattice Became the Pentagon's Most Dangerous AI War Brain in History

The dominant logic of 20th-century military procurement rested on hardware primacy. Aircraft carriers, fifth-generation jets, armored vehicles, and ballistic missile systems represented the measurable currency of national power.

That logic is not obsolete, but it is being aggressively supplemented — and in some contexts supplanted — by a newer doctrine: that modern warfare is, at its core, a software problem, and that the nation that commands the most capable, most adaptive, and most interoperable AI decision layer will control the tempo and outcome of 21st-century conflict.

Anduril Industries was founded in 2017 by Palmer Luckey, the inventor of the Oculus Rift virtual reality headset, and backed by Peter Thiel's Founders Fund.

It emerged at the intersection of Silicon Valley's engineering culture and Washington's growing anxiety about technological competition with China and Russia.

From its inception, Anduril positioned itself not as a traditional defense prime contractor — a Lockheed Martin or Raytheon — but as a software company that happened to manufacture physical weapons systems.

The distinction is more than semantic: it signals a fundamentally different relationship between product development cycles, battlefield feedback loops, and institutional adaptation.

Lattice is the philosophical and technical crystallization of that vision. The company describes it as an open-architecture, AI-enabled command-and-control platform designed to integrate sensors, effectors, and autonomous systems across domains — land, sea, air, space, and cyberspace — into a unified operational picture.

Its selection as the enterprise platform for the Army's Joint Interagency Task Force–401 (JIATF-401), the Pentagon's primary counter-drone unit, represents the culmination of a decade-long institutional shift toward treating AI as the connective tissue of military power.

History and Current Status: From Border Surveillance to the Battlefield

Silicon Valley Goes to War: The $20 Billion Anduril Contract That Rewrites American Military Power

Lattice did not begin as a battlefield system.

Its earliest public deployments involved border surveillance along the U.S.-Mexico frontier, where it processed sensor data from cameras, radar, and ground sensors to detect unauthorized crossings.

The system demonstrated a core capability that would define all subsequent iterations: the ability to aggregate heterogeneous data streams from multiple sensors and present a unified, machine-interpretable operational picture to human operators with minimal latency.

From border security, Lattice evolved into a counter-UAS tool.

As drone proliferation accelerated globally — driven by the conflict in Ukraine, the Houthi campaign in Yemen, and Iranian proxy networks across the Middle East — the Pentagon's demand for AI-enabled drone detection and interdiction systems became urgent.

Anduril's Lattice emerged as the technological answer, offering modular integration with existing radar, electro-optical, and kinetic interceptor systems.

The company's growth trajectory has been extraordinary.

From a valuation of $14 billion in August 2024, Anduril surged to $28 billion in February 2025 and, as of early 2026, was reportedly in advanced discussions with Thrive Capital and Andreessen Horowitz to raise approximately $4 billion in new funding that would nearly double its valuation again.

The January 2026 Series G-1 funding round, which valued the company at $32.54 billion and was priced at $42.93 per share, reflects investor confidence in the company's durable position within the Pentagon's procurement architecture.

The $20 billion Army enterprise contract announced in March 2026 marked the definitive validation of that conviction.

By consolidating more than 120 procurement instruments into a single 10-year vehicle administered by the Army Contracting Command at Aberdeen Proving Ground, the Department of Defense effectively embedded Lattice as a permanent infrastructure layer: not a discretionary capability but a structural necessity.

How Lattice Works: Architecture, Data Mesh, and Decision Support

At its most fundamental level, Lattice is a software platform designed to address sensor-to-shooter latency in complex, data-rich operational environments.

Modern warfare generates extraordinary volumes of signals intelligence, imagery, radar returns, communications intercepts, geospatial feeds, and open-source information, all of which flow simultaneously.

Without a coherent integration layer, this data constitutes noise rather than actionable intelligence. Lattice is engineered to transform that noise into a signal.

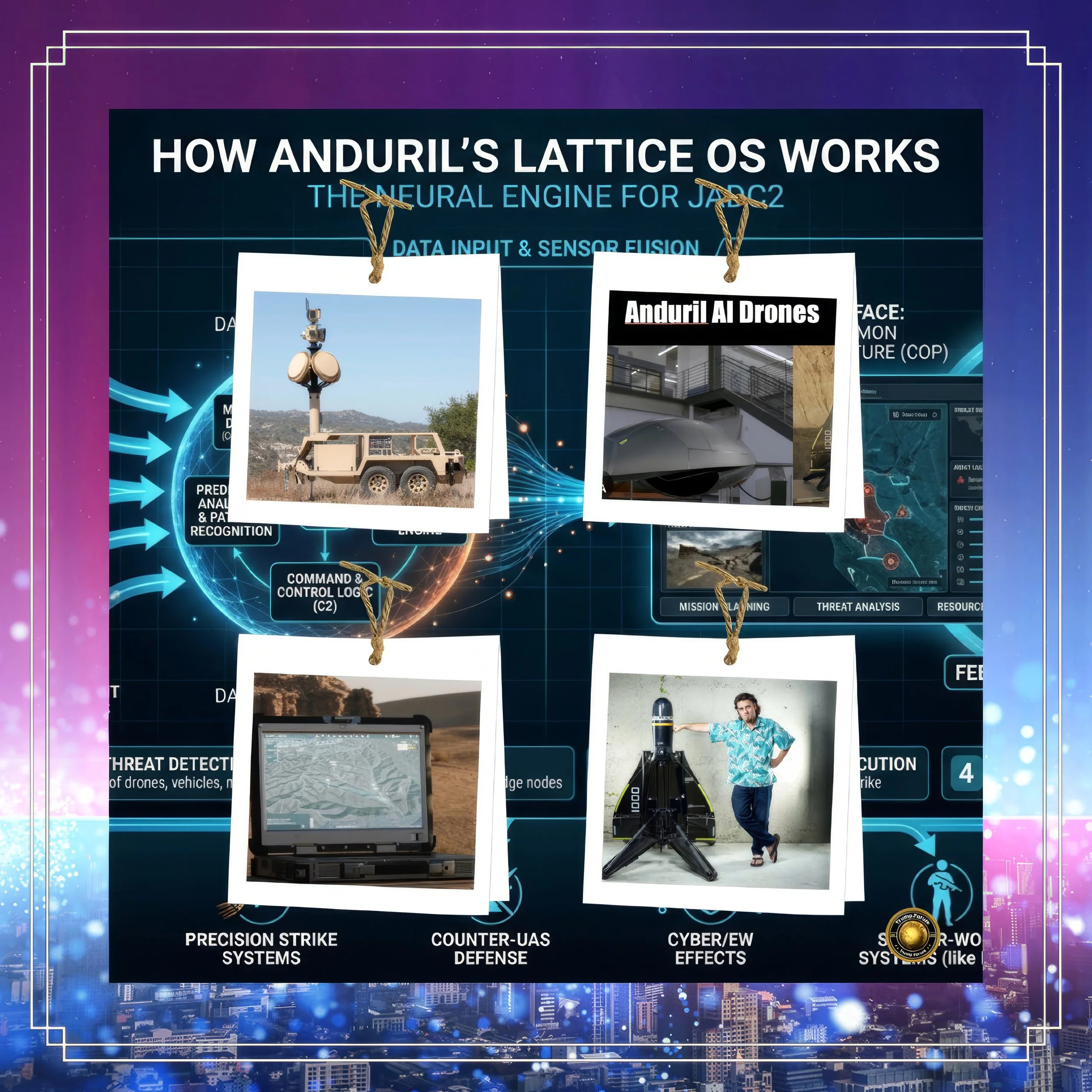

Lattice operates through 3 core architectural components.

The first is Lattice Mesh, a decentralized data distribution layer that enables frontline units to access and share sensor data across tactical networks without routing information through central processing hubs.

The Pentagon's Chief Digital and AI Office (CDAO) awarded Anduril a $100 million, 3-year contract in December 2024 specifically to expand this mesh capability and open it to third-party developers, allowing external software applications to run natively on the Lattice infrastructure.

This democratization of battlefield data access represents a significant departure from legacy centralized command architectures.

The second component is Lattice C2 — the command-and-control layer that translates aggregated sensor data into decision-support outputs for human operators.

Lattice C2 fuses sensor inputs, performs automated threat classification using deep learning models, and presents engagement recommendations to operators who retain final authority.

In counter-UAS configurations, this means a single operator can simultaneously monitor multiple drone threats, receive automated engagement recommendations, and direct interceptors accordingly.

Compressing decision cycles from minutes to seconds is operationally transformative.

The third component is Lattice Edge — hardware and software built specifically for tactical environments where bandwidth, power, and connectivity are constrained.

The Menace family of edge devices, purpose-built to the silicon level for national security operations, ensures that Lattice's decision-support capabilities persist even in degraded communications environments.

This resilience differentiates Lattice from cloud-dependent systems that become inoperable when communications are disrupted by electronic warfare.

Lattice also incorporates a continuous AI training loop: all tactical data ingested at the edge is backhauled into government cloud enclaves for retraining and improving the underlying machine learning models.

This feedback architecture means the system improves with operational usage, progressively reducing classification error rates and adapting to novel threat signatures.

Lattice and Maven Smart System: Integration, Competition, and Convergence

The relationship between Lattice and Palantir's Maven Smart System is among the most strategically consequential technological partnerships in current American defense.

MSS, funded by the CDAO, has scaled from a small prototype into a program with a $1.3 billion contract ceiling, serving most combatant commands, the Joint Staff, and the Marine Corps.

It is primarily an intelligence analytics platform, designed to process vast quantities of imagery, signals, and open-source data to support targeting and situational awareness at the operational and strategic levels.

Lattice, by contrast, is a tactical integration and autonomy platform — designed for the battlefield edge rather than the command post. Where MSS processes historical and near-real-time intelligence to develop target packages, Lattice executes those packages in real time, coordinating sensors and effectors at machine speed.

The two systems are not direct competitors; they operate at different layers of the kill chain. But they are increasingly interdependent, and the question of how they interoperate — and who governs the seam between them — carries enormous strategic implications.

The CDAO has established formal onboarding pathways for third-party vendors to integrate with the MSS data environment, thereby lowering barriers for innovative firms to prototype applications within the DoD's AI infrastructure.

Anduril has worked within this architecture: Lattice Mesh delivers tactical sensor data upward into government cloud enclaves where MSS models can ingest and process it.

The integration, however, is not seamless by default. Different data schemas, classification levels, network protocols, and update cycles create friction points that require active engineering coordination.

In December 2024, Palantir and Anduril announced a formal partnership to combine their respective platforms and AI technologies for national security use cases — an acknowledgment that the two systems serve complementary rather than competing functions and that their combined value exceeds their individual contributions.

The partnership signaled a broader trend of consolidation among Pentagon AI vendors, driven by the realization that the DoD's demand is large enough to sustain multiple major platforms, but that interoperability between them will ultimately determine battlefield effectiveness.

The Minab Incident: Data Governance Failure and the Limits of AI in War

The strike on the Shajareh Tayyebeh girls' school in Minab, Iran, on February 28, 2026 — the opening day of Operation Epic Fury — killed at least 165 schoolgirls and injured dozens more.

It has become the defining case study in the global debate over AI-assisted targeting, not because artificial intelligence definitively caused the strike, but because the incident exposed the systemic vulnerabilities that accompany the integration of AI decision-support tools into time-compressed military operations.

Internal U.S. investigations reported by the New York Times concluded that the strike resulted from the school being incorrectly identified as a military facility during the target development process because of outdated data.

Satellite imagery analysis suggests the building was once part of an IRGC compound but was subsequently walled off and repurposed as a school — a change not reflected in the targeting data provided to operators.

Former military officials corroborated this assessment, attributing the error to outdated human-curated data fed into the Maven targeting system, not to a malfunction of the AI system per se.

The Minab incident nonetheless illuminates precisely the category of error that any AI-assisted targeting architecture — including Lattice — must be engineered to prevent.

The failure was not in the AI's processing logic but in the data quality upstream of that logic.

AI systems do not independently assess data freshness; they classify whatever inputs they receive according to patterns learned in training. When the upstream data is stale, corrupted, or manipulated, AI classification operates with false precision on a false foundation.

The resulting output can be internally consistent and confidently presented to human operators, who may lack the situational awareness or the time to override it.

Reports that the Maven program had been integrated with Anthropic's Claude language model at the time of the Minab strike raised additional concerns about the role of large language models in target development — specifically, whether the system's natural-language synthesis of intelligence assessments may have obscured the temporal gaps and confidence limitations in underlying data sources.

These are not hypothetical risks; they reflect documented failure modes in AI systems deployed under operational pressure.

How Lattice Attempts to Mitigate Data Ingest Failures

Can Lattice Save Lives or Will Outdated Data Make It the Next Minab Disaster?

Anduril's engineering philosophy for Lattice addresses several layers of the data governance problem.

The platform's mesh networking architecture is designed to source data as close to the point of origin as possible — directly from sensors on the battlefield rather than from processed intelligence packages that may contain data-age artifacts.

Lattice Mesh enables rapid sensor-to-sensor tasking, so that when one sensor detects an anomaly, adjacent sensors are automatically cued to verify or refute the classification before an engagement recommendation is generated.
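The cross-cueing idea described above can be illustrated with a minimal sketch. Nothing here reflects Anduril's actual implementation: the `Detection` record, the `corroborate` function, and the thresholds `min_agreeing` and `min_confidence` are all hypothetical names and values chosen for illustration. The sketch assumes that a recommendation is only produced when enough independent, sufficiently confident sensors agree with the initial classification.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Detection:
    sensor_id: str      # hypothetical sensor name, e.g. "radar-1"
    label: str          # classification reported by that sensor
    confidence: float   # 0.0 to 1.0, as reported by the sensor


def corroborate(initial: Detection, followups: list[Detection],
                min_agreeing: int = 2, min_confidence: float = 0.6) -> str:
    """Return 'recommend', 'refuted', or 'unverified' for an initial detection.

    Only follow-up detections from *other* sensors that meet the confidence
    floor are counted, so one sensor cannot corroborate itself.
    """
    credible = [d for d in followups
                if d.sensor_id != initial.sensor_id
                and d.confidence >= min_confidence]
    agree = sum(1 for d in credible if d.label == initial.label)
    disagree = len(credible) - agree

    if agree >= min_agreeing and agree > disagree:
        return "recommend"
    if disagree > agree:
        return "refuted"
    return "unverified"


first = Detection("radar-1", "uas", 0.8)
cued = [Detection("eo-2", "uas", 0.9), Detection("ir-3", "uas", 0.7)]
print(corroborate(first, cued))  # recommend
```

The design choice worth noting is the third outcome: when adjacent sensors neither confirm nor refute, the sketch returns "unverified" rather than defaulting to a recommendation.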

The platform also incorporates data provenance tracking: all sensor inputs are tagged with metadata including source identity, geolocation, timestamp, and confidence score.

This provenance architecture, in principle, allows operators — and the AI models themselves — to assess data freshness before acting on it.

In counter-UAS scenarios, where the operational tempo is measured in seconds, this metadata serves as a rapid-filter mechanism: sensor data older than a defined threshold can be automatically flagged or downweighted in the threat classification algorithm.
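A minimal sketch of how provenance metadata could drive a freshness filter follows. The field names mirror the metadata listed above (source identity, geolocation, timestamp, confidence score), but the `SensorReading` type, the linear decay rule, and the ten-second threshold are assumptions made for illustration, not a description of how Lattice actually weights data.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class SensorReading:
    source_id: str          # which sensor produced the reading
    lat: float              # geolocation of the observation
    lon: float
    observed_at: datetime   # timezone-aware timestamp of the observation
    confidence: float       # sensor-reported confidence, 0.0 to 1.0


def freshness_weight(reading: SensorReading, now: datetime,
                     max_age: timedelta) -> float:
    """Linear decay: 1.0 for a brand-new reading, 0.0 at or beyond max_age."""
    age = now - reading.observed_at
    if age <= timedelta(0):
        return 1.0
    if age >= max_age:
        return 0.0
    return 1.0 - age / max_age


def assess(reading: SensorReading, now: datetime, max_age: timedelta) -> dict:
    """Flag stale readings and downweight the rest by age."""
    weight = freshness_weight(reading, now, max_age)
    return {
        "stale": weight == 0.0,
        "weighted_confidence": reading.confidence * weight,
    }


now = datetime(2026, 1, 1, 12, 0, 0, tzinfo=timezone.utc)
reading = SensorReading("eo-2", 27.1, 57.1, now - timedelta(seconds=5), 0.8)
print(assess(reading, now, max_age=timedelta(seconds=10)))
# {'stale': False, 'weighted_confidence': 0.4}
```

A threshold measured in seconds suits moving aerial targets; the same mechanism applied to static infrastructure would need thresholds measured in days or months, which is where the harder problem discussed next arises.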

However, the Minab failure mode, in which a building's change of use was never captured in updated sensor or imagery data, represents a class of problem that real-time sensor feeds alone cannot fully solve.

Static infrastructure classification requires periodic ground-truth verification, and in denied or contested environments where persistent surveillance is limited, data staleness is an inherent operational risk.

Anduril's open-architecture model, which allows third parties to contribute additional sensor layers and imagery sources, partially mitigates this risk by increasing the diversity and density of data flows.

But no architecture that operates at machine speed under delegated human authority eliminates it entirely.

Active Warfare Deployment: The Middle East and Beyond

Anduril's Lattice has moved well beyond laboratory testing into active operational deployment.

The most significant current theater is the Middle East, where the company's president, Matthew Steckman, confirmed in March 2026 that Anduril systems are among the principal tools being used to counter Iran's Shahed loitering munitions during Operation Epic Fury.

Since February 28th, 2026, Iran has launched thousands of Shahed drones targeting American forces and allies in the region, creating a sustained operational demand for AI-driven counter-drone architecture that no legacy system is capable of addressing at the required scale.

Anduril's deployed systems include the Wisp 360-degree infrared sensor for drone detection and the Roadrunner AI-driven interceptor for engaging cruise missiles and larger uncrewed aircraft.

Lattice serves as the integration and command layer, connecting these platforms and enabling coordinated detection-to-defeat sequences at machine speed.

While Anduril has declined to confirm specific system configurations deployed in the Middle East, the operational context — thousands of drone threats, degraded supply chains for conventional interceptors, geographically dispersed force-protection requirements — closely aligns with Lattice's design parameters.

In Ukraine, Anduril's Ghost drone systems were deployed by Ukrainian forces.

However, reports emerged in late 2025 that Ukrainian operators had suspended use of Anduril's Altius drones due to vulnerabilities to Russian electronic warfare.

The Ghost X — an upgraded model released in late 2023 to address earlier failures — itself experienced a publicized crash during a U.S. Army exercise in Germany.

These failures do not represent fundamental flaws in Lattice as a C2 platform.

Still, they expose the broader challenge of electronic warfare resilience in contested signal environments — a challenge that Lattice's edge computing architecture is specifically designed to mitigate, though not completely solve.

Beyond tactical conflict theaters, Lattice has been deployed for space surveillance.

In November 2024, Anduril received a $99.7 million IDIQ contract from U.S. Space Systems Command to deliver Lattice as the mesh networking backbone for the modernized Space Surveillance Network, with full deployment mandated by the end of 2026.

This extension of Lattice into the space domain reflects the platform's ambition to be a true multi-domain operating system for American military power.

Known Failures and Lessons: A Critical Assessment

From Drone Crashes to Desert Wars: Anduril's Bumpy Road to Pentagon Supremacy

Intellectual honesty requires a candid assessment of Anduril's failure record.

A Reuters investigation published in November 2025 documented multiple Altius drone crashes during testing at Eglin Air Force Base in Florida, with 2 aircraft plummeting 8,000 feet to the ground within hours of each other.

A Wall Street Journal report simultaneously noted that more than a dozen Navy drone boats had gone dead in the water during a major exercise off the California coast, requiring towing, and that Anduril representatives had reportedly misled military personnel about the incident's causes.

Anduril has characterized these failures as isolated incidents, and the company notes in its own defense that hardware failure rates during development testing are an accepted feature of iterative engineering, not evidence of systemic platform dysfunction.

The company's internal engineering philosophy explicitly acknowledges failure as part of development, with founder Palmer Luckey stating, "We do fail a lot," in a candid defense of the company's iterative testing methodology.

That candor is arguably a structural advantage over legacy contractors that conceal problems from government clients to protect contract relationships.

However, the pattern of failures — drones susceptible to electronic warfare, autonomous boat systems malfunctioning during exercises, company representatives providing inaccurate post-incident briefings — points to real organizational risks in scaling from prototype to operational deployment.

The $20 billion enterprise contract creates powerful financial incentives to expand capability claims beyond demonstrated performance, and institutional pressure from Pentagon clients to deploy rapidly in active operational contexts may compress the testing timelines required for responsible capability validation.

Cause-and-Effect Analysis: The Strategic Logic and Its Consequences

The Pentagon's embrace of Anduril and Lattice is driven by a convergence of strategic pressures operating across multiple timescales.

In the immediate term, the proliferation of cheap, mass-produced drone threats — from Iranian Shaheds to Russian Lancet loitering munitions — has created a tactical crisis that legacy air-defense systems are too slow and too expensive to address at scale.

A Shahed drone that costs approximately $20,000 to manufacture should not require a $2 million interceptor missile to destroy; AI-driven, autonomous counter-drone systems can close that cost asymmetry.
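The cost asymmetry can be made concrete with a short calculation using the two figures quoted above. The function names and the raid size of 1,000 drones are illustrative assumptions, not figures from any program of record.

```python
def cost_exchange_ratio(interceptor_cost: float, threat_cost: float) -> float:
    """Defender dollars spent per attacker dollar destroyed."""
    return interceptor_cost / threat_cost


SHAHED_COST = 20_000          # approximate unit cost cited in the text
INTERCEPTOR_COST = 2_000_000  # interceptor missile cost cited in the text

ratio = cost_exchange_ratio(INTERCEPTOR_COST, SHAHED_COST)
print(ratio)  # 100.0

# Hypothetical raid size, chosen only to show how the gap scales.
raid_size = 1_000
attacker_spend = raid_size * SHAHED_COST
defender_spend = raid_size * INTERCEPTOR_COST
print(attacker_spend, defender_spend)  # 20000000 2000000000
```

At a 100-to-1 ratio, a defender relying on one such interceptor per drone would spend $2 billion to defeat a raid that cost the attacker $20 million to build, which is the gap that cheaper autonomous interceptors are meant to narrow.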

In the medium term, the Pentagon's realization that China and Russia are aggressively developing AI-enabled military capabilities — autonomous swarms, AI-assisted targeting, electronic warfare powered by machine learning — has created strong institutional incentives to accelerate domestic AI defense capability development, accepting higher near-term risk for long-term strategic advantage.

The $20 billion Anduril contract is as much a statement of intent as it is a procurement instrument: it signals that the U.S. government views AI-native defense firms as critical infrastructure.

The consequence of this acceleration, however, is that systems are being deployed operationally before they have fully proven their resilience to adversarial manipulation, electronic warfare, and data quality degradation.

The Minab strike is the most catastrophic manifestation of this risk so far.

The cause-and-effect chain is not abstract: speed of deployment, reliance on imperfect data governance, delegation of classification tasks to AI systems under time pressure, and insufficient human override capacity in the kill chain collectively create conditions in which catastrophic misidentification becomes statistically probable over sufficient operational exposure.
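The claim that rare errors become probable over enough exposure follows from basic probability. If each decision carries an independent misidentification probability p, the chance of at least one error in n decisions is 1 - (1 - p)^n. The sketch below computes this; the per-decision error rate of 0.1 percent and the decision counts are purely illustrative assumptions, not measured values for any fielded system, and real errors are unlikely to be fully independent.

```python
def prob_at_least_one_error(p: float, n: int) -> float:
    """Probability of one or more errors in n independent decisions,
    each with per-decision error probability p."""
    if not 0.0 <= p <= 1.0:
        raise ValueError("p must be between 0 and 1")
    if n < 0:
        raise ValueError("n must be non-negative")
    return 1.0 - (1.0 - p) ** n


# Illustrative numbers only: a 0.1% per-decision error rate.
for n in (10, 100, 1_000, 10_000):
    print(n, round(prob_at_least_one_error(0.001, n), 3))
```

Under these assumed numbers, the probability of at least one misidentification rises from about 1 percent over 10 decisions to roughly 63 percent over 1,000, which is the sense in which a low per-decision error rate still makes a catastrophic error likely at scale.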

The effect on international humanitarian law is equally significant. The principles of distinction — requiring that military strikes differentiate between combatants and civilians — and proportionality are foundational to the laws of armed conflict.

When AI systems make classification errors at machine speed, the legal accountability chain fractures: the human operator approved a recommendation generated by a model trained on data with undisclosed temporal limitations, even though the data was technically marked as valid by a provenance tag.

Accountability in such architectures is diffuse by design, and diffuse accountability is functionally equivalent to impunity.

Anduril vs. Palantir: Competition, Collaboration, and Pentagon Dynamics

Anduril vs. Palantir: The $60 Billion Battle to Control America's AI War Machine

The relationship between Anduril and Palantir is more nuanced than a binary competition narrative suggests.

Palantir was long the Pentagon's dominant AI platform: its Maven Smart System contract, now carrying a $1.3 billion ceiling, cemented its position as the primary intelligence analytics backbone for combatant commands.

Its philosophical approach — building data integration and analytics platforms for human analysts — differs from Anduril's autonomy-first orientation. Palantir processes intelligence for human decision-makers; Anduril automates machine-speed operations.

These differences have historically positioned the two companies at different layers of the kill chain rather than as direct competitors.

The December 2024 partnership announcement reinforced this complementarity, with the two companies explicitly combining Lattice's edge autonomy with Palantir's intelligence analytics for integrated national security applications.

Yet the partnership carries latent competitive tension: as Anduril extends Lattice upward into the intelligence and analytics space, and as Palantir's MSS extends downward into operational C2 support, the two platforms are converging toward the same contested middle ground.

By September 2025, Palantir's position as the Pentagon's sole dominant AI firm was already eroding. Analysis from Benzinga identified Anduril, Shield AI, and Applied Intuition as emerging Pentagon favorites, with Anduril in particular redefining the battlefield tech stack toward hardware-integrated AI solutions that Palantir has largely avoided building.

Palantir's response has been to expand its own partnerships — notably a major collaboration with Booz Allen Hamilton for AI-enabled logistics and autonomous systems.

But Anduril's $20 billion enterprise contract gives it a structural procurement advantage that will be difficult to counterbalance within the current acquisition cycle.

The next competitive inflection point will come from how each company addresses the data governance crisis exposed by Minab.

Palantir's intelligence analytics heritage gives it architectural tools for data lineage, provenance, and quality assurance that Lattice's edge-focused design has historically deprioritized in favor of speed.

Whichever platform demonstrates stronger safeguards for responsible AI targeting will earn a structural institutional advantage—not merely commercial, but legal and political as well.

The Roadmap: Lattice's Next Generation and Pentagon Vision Through 2036

The $20 billion, 10-year enterprise contract functions as a roadmap document as much as a procurement instrument. Several programmatic directions are clearly signaled in current contract activity and public statements.

The first trajectory is multi-domain expansion. Lattice is already operating across land, maritime, aerial, and space domains.

The Space Surveillance Network contract, requiring full deployment by the end of 2026, represents the space layer's institutionalization.

Cyber domain integration is the likely next frontier, as AI-enabled electronic warfare and cyber operations increasingly require the same kind of real-time sensor-to-effector integration that Lattice provides in the physical domain.

The second trajectory is third-party ecosystem development. By opening Lattice Mesh to external developers — facilitated by the $100 million CDAO contract — Anduril is building a platform economy around its core infrastructure.

This mirrors the strategic logic of app store ecosystems: the more third-party capability that runs natively on Lattice, the more indispensable Lattice becomes and the higher the switching costs for any alternative architecture.

Wind River's integration with the Lattice Sandbox for airborne and space safety-critical systems is an early indicator of this ecosystem's expansion.

The third trajectory is the integration of Lattice with the IBCS-M (Integrated Battle Command System Maneuver) program.

The Army selected Anduril in November 2025 to provide the next-generation fire control platform for IBCS-M, enabling a single operator to manage multiple simultaneous counter-drone threats through fused sensor data and automated fire control.

This integration effectively makes Lattice the fire control brain of the Army's future air defense architecture.

The fourth trajectory involves hypersonic weapons and contested logistics. As peer-competitor threats evolve toward hypersonic delivery systems — Chinese DF-17s, Russian Avangards — the decision timelines for intercept compress from minutes to seconds.

Lattice's machine-speed decision loop is the only architecture capable of operating at the tempo required by hypersonic intercept.

Pentagon roadmap conversations increasingly reference Lattice as the candidate platform for hypersonic defense coordination, though no formal contract in this domain has been publicly announced.

Anduril's Valuation and IPO: The Financial Dimension

Anduril's financial trajectory is inseparable from its Pentagon trajectory.

The company's valuation progression — $14 billion in August 2024, $28 billion in February 2025, $32.54 billion in January 2026, and a potential doubling to approximately $60 billion through the pending $4 billion funding round — reflects investor confidence that the $20 billion enterprise contract has de-risked the company's revenue baseline for the next decade.

IPO plans remain formally unannounced. As of mid-2025, no specific IPO timeline had been disclosed.

Analysts at Evercore ISI model a base-case IPO valuation of $40 billion to $60 billion, with an upside scenario approaching $90 billion if major long-term programs are secured.

The pending funding round from Thrive Capital and Andreessen Horowitz would likely serve as a final private-market capital raise before an eventual public offering. However, management has shown little urgency to access public markets given the availability of private capital at favorable terms.

The strategic question for IPO timing is whether Anduril prefers to go public before or after the $20 billion contract generates substantial recurring revenue.

Pre-IPO, the narrative is growth and potential; post-IPO, the narrative becomes execution and margin.

Given the company's track record of operational setbacks alongside strategic wins, its leadership may prefer to defer scrutiny of quarterly reporting until Lattice's deployment record is clearer.

Future Steps: The Institutional Stakes of AI Warfare

The Invisible Network Fusing Sensors, Drones, and AI Into One Lethal Military System

The future of Lattice is not only a story about Anduril's growth; it is a story about the institutional transformation of warfare itself and the normative frameworks — legal, ethical, strategic — that must evolve alongside it.

The most urgent near-term imperative is data governance reform. The Minab strike has catalyzed significant policy interest in mandatory data freshness standards for AI-assisted targeting systems.

Congressional hearings, international humanitarian law scholarship, and internal DoD review processes are all moving simultaneously toward frameworks that would require AI targeting systems to surface data provenance metadata to human operators in real time and to impose mandatory human re-verification for any target whose underlying data exceeds a defined age threshold.

Lattice's data mesh architecture is technically capable of supporting such requirements, but implementing them will require policy mandates with enforcement mechanisms.
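As a rough illustration of what such a requirement might look like in software, the sketch below implements a hold that blocks automated progression when a record's last verification date exceeds an age threshold, and surfaces the provenance to the operator in plain language. The function names, the record fields, and the 180-day threshold are hypothetical; no actual DoD standard or Lattice interface is being described.

```python
from datetime import datetime, timedelta, timezone


def requires_reverification(last_verified: datetime, now: datetime,
                            max_age: timedelta) -> bool:
    """True when the underlying data is older than the policy threshold."""
    return (now - last_verified) > max_age


def operator_summary(record_id: str, source: str, last_verified: datetime,
                     now: datetime, max_age: timedelta) -> str:
    """Plain-language provenance line shown to the human operator."""
    age_days = (now - last_verified).days
    if requires_reverification(last_verified, now, max_age):
        status = "RE-VERIFY REQUIRED"
    else:
        status = "within threshold"
    return (f"{record_id}: source={source}, "
            f"last verified {age_days} days ago, {status}")


now = datetime(2026, 3, 1, tzinfo=timezone.utc)
stale_date = now - timedelta(days=400)
print(operator_summary("record-001", "imagery-archive", stale_date, now,
                       max_age=timedelta(days=180)))
# record-001: source=imagery-archive, last verified 400 days ago, RE-VERIFY REQUIRED
```

The point of the sketch is that the age of the data is stated explicitly in the operator-facing output rather than buried in metadata, so a confident-looking classification cannot silently rest on an outdated record.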

The second imperative is adversarial robustness testing. Electronic warfare susceptibility — demonstrated in Ukraine against Ghost drones and Altius systems — represents a class of vulnerability that static testing environments cannot adequately assess.

Operational AI systems face adversaries actively seeking to exploit their failure modes through spoofing, jamming, and adversarial data injection.

The DoD's Test and Evaluation community needs to develop AI-specific red-teaming protocols that simulate adversarial manipulation across the full data ingestion pipeline.

The third imperative is alliance interoperability. NATO partners are observing Lattice's deployment with a mix of strategic interest and institutional anxiety: interest because Lattice's counter-drone capabilities address shared threats, anxiety because deep integration with a proprietary U.S. platform raises sovereignty and interoperability questions for allied defense architectures.

The $20 billion contract creates a U.S.-centric technical monoculture around Lattice; building genuine interoperability with allied C2 systems — France's SCORPION, the UK's Project MORPHEUS, and Israel's Rafael networks — will require sustained technical diplomacy alongside procurement decisions.

Conclusion: The Weight of the Algorithm

Palmer Luckey's Defense Empire: How a VR Pioneer Built the Future of Autonomous Warfare

Anduril's Lattice represents the most ambitious and consequential deployment of artificial intelligence in the history of military technology.

Its architecture is elegant, its operational logic is sound, and its strategic position within the Pentagon's institutional ecosystem is now structurally secured by a $20 billion contract that will define American military capability through 2036.

Palmer Luckey's audacious proposition — that modern warfare is a software problem — has been validated by the DoD in the most emphatic possible institutional language.

But the Minab school strike has injected a corrective into the triumphalism of the AI warfare narrative.

At least 165 schoolgirls are dead because the targeting process failed to account for the difference between what a building was and what it had become.

That failure was, in its proximate cause, human — outdated data in the Maven system, insufficient verification procedures under operational pressure.

But it was also systemic: an architecture that delegated classification confidence to AI models, operating at machine speed on stale information, under institutional pressure to deliver kinetic effects rapidly.

Lattice is not intrinsically responsible for Minab.

But Lattice, and every AI warfare platform in the American arsenal, now bears the institutional obligation to demonstrate that its data governance architecture, its human override design, and its adversarial robustness testing are commensurate with the lethal authority it exercises.

The algorithm carries weight.

The question that will define the next decade of AI warfare is whether the institutional frameworks governing that weight are adequate to bear it.