Fake Wars: How AI-Made Videos Are Being Used to Fool the World

Executive Summary

Right now, while missiles fly over Iran and across the Middle East, another war is being fought on your phone screen.

It is a war made of fake videos — videos so realistic that millions of people believe they show real events, real explosions, and real suffering.

This FAF article explains what deepfakes are, how they are being used in the current Iran conflict, why they are so dangerous, and what can be done to stop them.

Origin of Cyabra

Cyabra (Cyabra Strategy Ltd. / Cyabra Tech) is an Israeli company, headquartered in Tel Aviv, Israel.

It was founded in 2018 (some sources say 2017) by veterans of Israeli intelligence units.

Its main operations and most of its employees (around 67) are in Israel, with a smaller presence in New York, USA.

Cautionary Note on the Cyabra Report

Readers are strongly advised to approach the Cyabra report with caution.

In FAF's assessment, Cyabra functions as a pro-Israeli propaganda apparatus that specializes in deepfake technology.

FAF has no affiliation whatsoever with Cyabra and does not endorse, support, or validate its reports or findings in any manner.

Introduction

The Video You Just Shared Might Be a Lie

Imagine you open TikTok and see a dramatic video of a huge explosion inside the port of Tel Aviv.

Smoke is rising, sirens are blaring, and a news-style graphic confirms the time and date.

You share it with your friends immediately. Within the hour, one million other people have done the same thing.

The only problem? The video was made by a computer.

There was no explosion. No one was hurt. The entire scene was invented using artificial intelligence.

This is what is happening right now in the war involving the United States, Israel, and Iran, which began in early March 2026.

Alongside real military attacks and real casualties, a massive wave of fake videos and images — known as deepfakes — has flooded social media.

The fake content has been viewed hundreds of millions of times by people around the world who often cannot tell the difference between what is real and what is computer-generated.

History and Current Status

This Is Not the First Time — But It Is the Worst

Governments and military forces have always lied during wars.

This is not new.

During World War I, British officials spread fake stories about German soldiers killing babies in Belgium, and newspapers around the world printed those stories without checking if they were true.

During World War II, every side used posters, radio programs, and movies to make their own forces look heroic and their enemies look evil.

During the Cold War between the United States and the Soviet Union, both sides planted false stories in foreign newspapers and funded fake organizations to spread their preferred version of reality.

What is completely new today is how easy and cheap it has become to make a fake video that looks utterly convincing. In 2023, about 500,000 deepfakes were shared online.

By 2025, that number was estimated to have exploded to 8,000,000, a 1,500% jump in just two years.
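The percentage follows directly from the two figures quoted for 2023 and 2025. It measures the increase relative to the starting amount:

```latex
\text{increase} = \frac{8{,}000{,}000 - 500{,}000}{500{,}000} \times 100\%
               = \frac{7{,}500{,}000}{500{,}000} \times 100\%
               = 15 \times 100\% = 1{,}500\%
```

Put another way, the 2025 figure is 16 times the 2023 figure.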

Anybody with a smartphone and access to a free AI app can now produce a fake video of a warship being destroyed or a building collapsing that looks as believable as a scene from a Hollywood blockbuster.

The skills and equipment that once required entire film studios can now fit in a pocket.

Key Developments

What the Fake Videos Are Actually Showing

A company called Cyabra, which studies fake online activity, published a detailed investigation in March 2026 claiming that Iran is behind a massive organized campaign to flood social media with AI-generated fake content.

The campaign used tens of thousands of fake social media accounts — accounts pretending to be ordinary people in different countries — to spread these fake videos.

Together, these fakes gathered over 145 million views and more than 9 million likes, comments, and shares within a very short period.

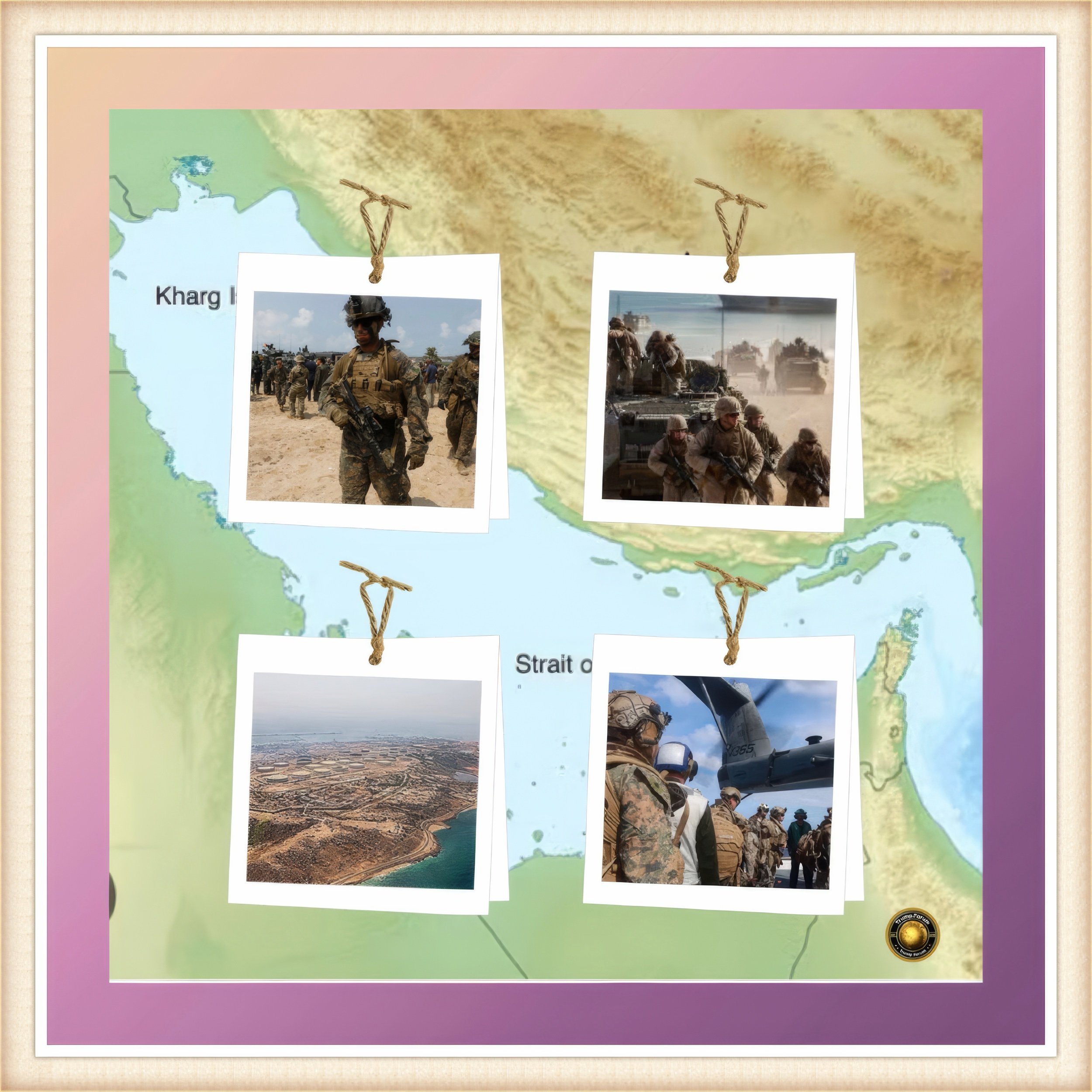

The fake videos showed things like: Iranian missiles destroying American navy ships in the Persian Gulf; massive explosions inside Israeli cities; crowds of Iranians celebrating military victories; and Israeli soldiers looking defeated and demoralized.

Some of the videos even accidentally left behind digital watermarks from the AI tools that made them — for example, a watermark from Google's Veo video-generation platform was visible on at least one widely shared clip.

In effect, the creators left behind a confession note by mistake.

Perhaps most disturbing of all, deepfake videos were also created showing the leaders of other countries — countries not even directly involved in the war — making statements they never made.

For example, fake videos of India's Prime Minister appeared online, falsely showing him declaring Iran a terrorist state and threatening Pakistan.

These fabrications were designed to drag other countries into the conflict by poisoning their relationships with Iran or making it look like they had taken sides.

Latest Facts and Concerns

Why This Is So Hard to Stop

The most frightening thing about today's deepfakes is how realistic they are.

Some AI researchers put it plainly: "we have reached a level of realism in video, audio, and image deepfakes that for most people, it is not discernible from fact."

Think about that for a moment. A video can be entirely fabricated, yet most human beings looking at it cannot tell.

The average person scrolling through TikTok at 11 o'clock at night while already emotionally charged about a war has almost no chance of identifying a sophisticated deepfake without specialized tools.

Social media platforms like TikTok, X, and Facebook have promised repeatedly to remove fake content, but they have failed to keep pace.

The design of these platforms actually works against truth: their algorithms are built to push content that triggers strong emotions — fear, outrage, shock — to the top of your feed, regardless of whether it is true.

A fake video of a warship exploding will get far more clicks and shares than a calm fact-check explaining why that video is false.

The platforms make more money from the fake video.

There is also a second and even more sinister problem called the "liar's dividend."

Because everyone now knows deepfakes exist, real videos of real events can also be dismissed as fake.

Iran has already used this trick — when genuine footage of its destroyed military facilities circulated online, Iranian officials simply declared the videos to be Western AI-generated propaganda. Truth itself becomes impossible to establish.

Cause-and-Effect Analysis

What Happens When People Believe the Fakes

When millions of people in countries across the Middle East, Africa, and Asia watch videos showing American warships being sunk by Iran, several very real and very dangerous things happen.

Anger against the United States and Israel rises dramatically, even in countries that have nothing to do with the war.

Governments in those countries face political pressure from their own citizens to condemn U.S. and Israeli actions, sometimes based entirely on events that never occurred.

Inside Iran, the fake videos serve a different but equally important purpose for the regime.

They reassure the Iranian population that their government is strong and winning, even when the real situation may be very different.

They help maintain support for the government at a moment when genuine military losses might otherwise cause public anger to turn inward.

Think of it like a sports team that has lost badly but whose fans are shown a fake highlight reel showing their team winning: the fans stay loyal because they never learn the real score.

For the soldiers, commanders, and political leaders making real decisions about whether to escalate the war or seek a ceasefire, the flood of fake content creates dangerous confusion.

If a commander receives a report — even a clearly labeled false report — that a U.S. ship has been sunk, and that report has already been seen by 10 million people on social media, the political pressure to respond as if it were true can become overwhelming.

This is how a fabricated video made by a computer can contribute to a real escalation of a real war with real casualties.

Future Steps

What Governments and Companies Must Do Now

Several countries and international bodies are beginning to take action, though not yet fast enough.

India, for example, passed new rules in February 2026 requiring social media platforms to remove deepfake content within three hours of being notified and to label all AI-generated videos, images, and audio clearly so users know what they are looking at.

The United Kingdom launched a new government-industry partnership in February 2026 to develop the world's first comprehensive framework for testing and standardizing deepfake detection tools.

The United States enacted the TAKE IT DOWN Act in May 2025. It made it a crime to publish non-consensual intimate images, including AI-generated deepfakes, and it requires platforms to remove such content within 48 hours of a valid request. However, the law focuses on personal harm rather than national security threats from state-sponsored disinformation.

What is still missing — and urgently needed — is an international agreement between countries that makes it illegal under international law for governments to use AI-generated fake content as a weapon of war.

Just as there are international treaties banning chemical weapons and landmines, there need to be binding rules banning state-sponsored deepfake warfare.

Without such an agreement, any country that wants to disrupt its enemies or shape global opinion can simply open an AI tool and unleash a flood of convincing lies.

Technology companies must also be held directly responsible for the content that their algorithms amplify.

It is not enough for TikTok or X to say they have policies against deepfakes when their systems are simultaneously designed to push the most emotionally engaging content — which is exactly what deepfakes are engineered to be — to the largest possible audience.

Platforms must be legally required to slow the spread of unverified content during active conflicts, deploy real-time AI detection, and fund independent fact-checking partnerships with established news organizations.

Finally, and perhaps most importantly in the long term, every person who uses social media needs better education about how to recognize synthetic content. When you see a dramatic war video online, ask these questions:

Who posted this? When was the account created?

Does any established news source confirm this event?

Does the video have any unusual visual artifacts, such as smooth skin where there should be wrinkles, unnatural mouth movements, or building edges that shimmer?

These are not foolproof tests, but they are a start.

A well-informed, skeptical public is the best long-term defense against the weaponization of fake media.

Conclusion

The Battlefield Is Your Screen

The war between the United States, Israel, and Iran is being fought on two fronts at once: a physical one, with missiles and airstrikes, and a digital one, with pixels and algorithms.

On the digital front, truth is not merely a casualty of war; it is the primary target. The goal of deepfake warfare is not to destroy buildings or ships. It is to destroy your ability to know what is real. And that, in the long run, is a more profound threat to democratic societies than any missile.

The good news is that unlike missiles, this threat can be defended against — with better laws, smarter technology, and most powerfully of all, a population that has learned to think before it shares.