US Accountability Crisis: AI Warfare, Minab Parallels, and the 1988 Iran Air Flight 655 Downing - Part II

Executive summary

The Minab school strike of February 28, 2026, has become one of the most consequential and controversial incidents of the ongoing conflict involving Iran and the United States.

The destruction of the Shajareh Tayyebeh primary school in the city of Minab, with the deaths of approximately 160-175 civilians, most of them children, has raised urgent questions about the role of artificial intelligence in warfare, the reliability of intelligence systems, and the persistent absence of accountability in modern military operations.

Although early online narratives framed the event as a fully autonomous artificial intelligence failure, the available evidence suggests a more complex reality.

The strike appears to have resulted from a chain of failures involving outdated intelligence, possible mapping discrepancies linked to the Defense Intelligence Agency, and human decision-making under compressed timelines. Artificial intelligence may have played a role in data processing or target identification, but there is no verified evidence that it independently executed or authorized the strike.

The incident has revived historical parallels, particularly with the 1988 downing of Iran Air Flight 655 by the United States Navy, which similarly involved misidentification, civilian deaths, and a lack of criminal accountability.

The Minab case also highlights a growing divide between technology stakeholders such as Palantir Technologies, which emphasizes operational integration, and Anthropic, which advocates strict guardrails and ethical constraints.

At a broader level, Minab illustrates a structural problem in modern warfare: advanced technologies have increased speed and reach but have not resolved fundamental issues of verification, proportionality, and responsibility.

Whether or not the strike is ultimately classified as a war crime, its strategic and moral consequences are already profound, deepening mistrust between Iran and the United States and intensifying global concern about the future of artificial intelligence in conflict.

Introduction

The evolution of warfare has always been shaped by technological change, yet the integration of artificial intelligence represents a transformation of a fundamentally different order.

Unlike previous innovations that enhanced human capabilities while preserving human judgment, artificial intelligence introduces the possibility that machines may shape or even determine critical decisions about life and death.

The Minab school strike provides a stark case study through which to examine these developments.

On February 28, 2026, a series of missile strikes destroyed a primary school in Minab, a city in southern Iran.

The timing of the attack, during school hours, ensured a high civilian toll.

Initial confusion about the nature of the strike quickly gave way to competing narratives.

Some accounts suggested a deliberate attack on a dual-use target located near an Islamic Revolutionary Guard Corps facility.

Others pointed to a catastrophic error, possibly involving outdated maps or flawed intelligence.

What distinguishes the Minab incident from earlier wartime tragedies is the central role attributed to artificial intelligence systems.

In an era when military operations increasingly rely on data fusion, predictive analytics, and automated targeting support, the question is no longer whether artificial intelligence is involved, but how it interacts with human decision-makers and institutional processes.

This FAF analysis seeks to disentangle fact from speculation while situating the Minab incident within a broader analytical framework.

It examines the role of intelligence failures, the implications of artificial intelligence in targeting systems, the legal and ethical dimensions of the strike, and the persistent challenge of accountability in modern warfare.

The Minab incident in factual context

The destruction of the Shajareh Tayyebeh primary school in Minab is among the most documented civilian incidents of the 2026 conflict.

The strike occurred in the late morning, at approximately 10:43-10:44 a.m. local time, when classrooms were occupied.

Reports indicate that multiple munitions struck the site, suggesting that the attack was not a single accidental discharge but part of a coordinated targeting sequence.

The location of the school is central to understanding the incident. It was situated in proximity to a facility associated with the Islamic Revolutionary Guard Corps.

This geographical closeness created a complex targeting environment in which civilian and military structures were intermingled.

In such environments, the risk of misidentification is significantly heightened, particularly when intelligence is incomplete or outdated.

Casualty estimates vary, but most credible accounts place the death toll between 150 and 170, the majority of them children aged 7 to 12.

The scale of the loss immediately drew international attention and condemnation, with humanitarian organizations emphasizing that the building was clearly identifiable as a school.

Despite the severity of the incident, definitive conclusions about responsibility remain elusive.

The United States has acknowledged that the strike is under investigation but has not accepted formal responsibility, a stance that recalls its handling of the downing of Iran Air Flight 655 in 1988.

Iranian authorities have attributed the attack to U.S. forces, while alternative narratives have attempted to deflect blame.

The absence of a transparent, conclusive investigation has allowed uncertainty to persist.

Intelligence systems and the problem of outdated maps

One of the most critical issues raised by the Minab incident is the role of intelligence failures, particularly those related to geospatial data.

Preliminary indications suggest that outdated targeting information may have contributed to the strike.

This raises questions about the functioning of the Defense Intelligence Agency and the broader intelligence architecture responsible for maintaining accurate maps and site classifications.

In modern military operations, geospatial intelligence forms the backbone of targeting systems.

Maps are not static representations but dynamic datasets that must be continuously updated to reflect changes in infrastructure, land use, and population patterns.

A building that once served a military function may later become a civilian facility, or vice versa. Failure to update such information can have catastrophic consequences.

There are several plausible explanations for why updated maps may not have been used in the Minab case.

One possibility is institutional lag, where updates exist but have not been fully integrated across all operational systems.

Another is data fragmentation, in which different agencies maintain separate databases that are not perfectly synchronized.

A third possibility is operational urgency.

The strike occurred on the first day of a major conflict, when decision-making timelines are compressed and verification processes may be abbreviated.

The suggestion that systems were undergoing updates at the time introduces an additional layer of complexity.

If a system relies on a temporary fallback dataset during an update cycle, it may revert to older information without adequate safeguards.

In such scenarios, the problem is not merely technological but procedural, reflecting the absence of robust fail-safe mechanisms.

Artificial intelligence and human decision-making

The role of artificial intelligence in the Minab incident is both central and contested.

While there is no confirmed evidence that an autonomous system independently selected and engaged the target, it is highly probable that artificial intelligence was involved in some aspect of the targeting process.

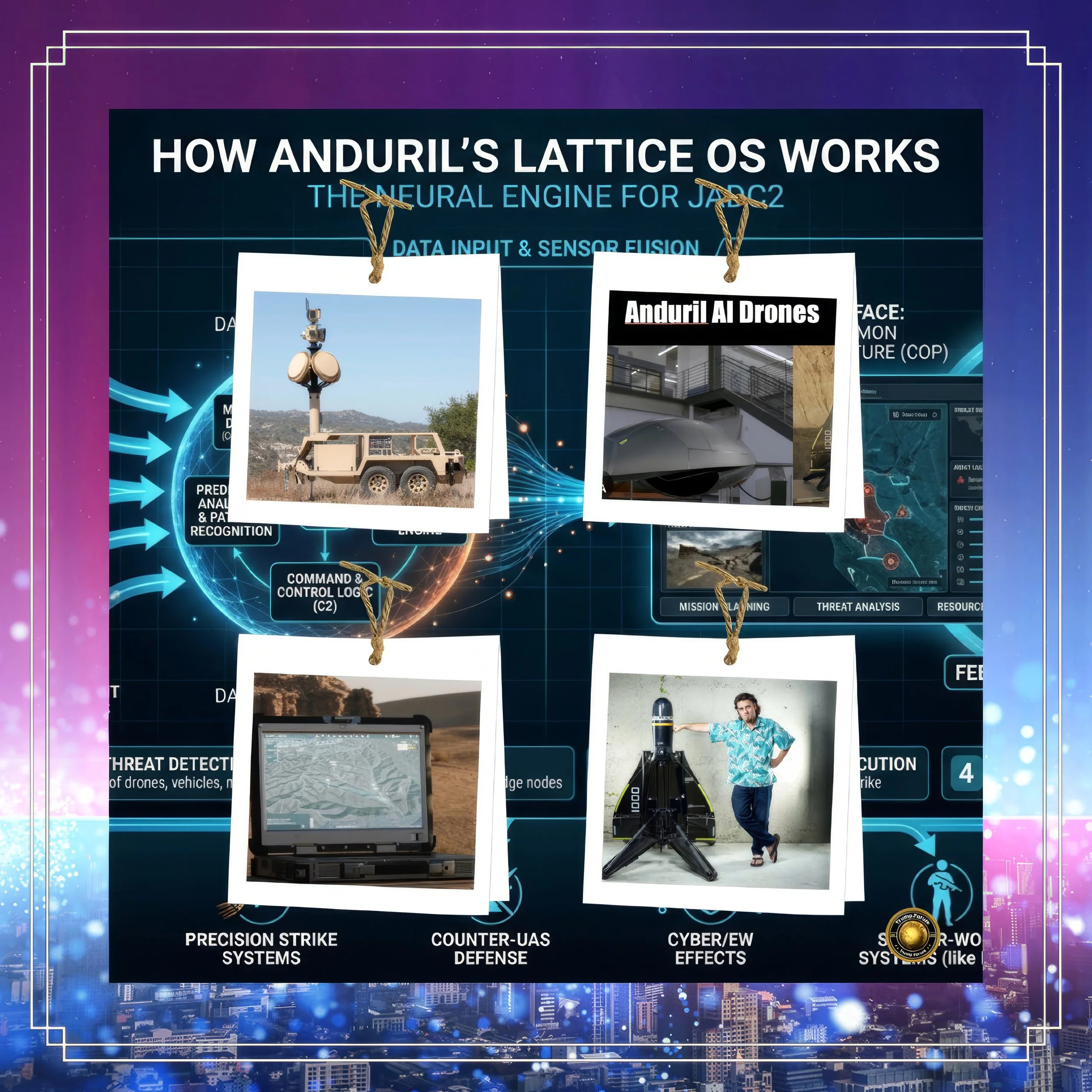

Modern military systems routinely use artificial intelligence for tasks such as image recognition, pattern analysis, and threat prioritization.

It is important to distinguish between different levels of autonomy. Most current systems operate under a “human-in-the-loop” model, in which human operators retain final decision-making authority.

However, the influence of artificial intelligence in shaping those decisions can be substantial. When an algorithm presents a target as high-confidence, operators may be inclined to trust that assessment, particularly under time pressure.

This dynamic creates a subtle but significant shift in responsibility.

Decisions are still formally made by humans, but they are increasingly guided by machine-generated outputs that may not be fully transparent or explainable.

If an error occurs, it becomes difficult to determine whether it originated in the data, the algorithm, or the human interpretation of the algorithm’s output.

The Minab incident illustrates the risks inherent in this hybrid model. If outdated maps were fed into an artificial intelligence system, the system’s recommendations would reflect those inaccuracies.

Human operators, relying on the perceived precision of the system, might approve a strike without recognizing the underlying flaw. In this sense, artificial intelligence does not eliminate human error; it transforms and redistributes it.

Legal implications and the question of war crimes

The legal classification of the Minab strike depends on the principles of international humanitarian law, particularly those codified in the Geneva Conventions and their Additional Protocols.

These principles include distinction, proportionality, and precaution.

The principle of distinction requires that parties to a conflict differentiate between military targets and civilian objects.

A school is presumptively a civilian object unless it is being used for military purposes.

The presence of a nearby military facility complicates this assessment but does not negate the obligation to verify targets.

The principle of proportionality prohibits attacks in which the expected civilian harm is excessive in relation to the anticipated military advantage.

Given the high number of civilian casualties in Minab, this principle is central to any legal evaluation.

The principle of precaution requires that all feasible steps be taken to minimize harm to civilians.

This includes verifying targets and choosing means and methods of attack that reduce risk.

If the Minab strike resulted from a failure to adequately verify the nature of the target, it could constitute a violation of these principles.

Whether it rises to the level of a war crime depends on the presence of intent or recklessness.

If commanders knew or should have known that the target was a school, the legal case becomes stronger.

If the strike was based on genuinely flawed intelligence despite reasonable precautions, it may be classified as an unlawful but unintentional attack.

Accountability and institutional response

One of the most striking aspects of the Minab incident is the absence of clear accountability.

The Pentagon and the White House have acknowledged the incident only in general terms, emphasizing that it is under investigation.

No individuals have been identified as responsible, and no disciplinary actions have been announced.

This pattern is not unique. Modern military institutions often respond to controversial incidents with prolonged investigations that may take months or years to conclude.

The complexity of the systems involved, combined with the sensitivity of operational details, contributes to this delay.

There are also strategic considerations. Admitting responsibility during an ongoing conflict can have diplomatic and operational consequences.

It may expose the state to legal claims, undermine alliances, or embolden adversaries.

As a result, governments often adopt a cautious approach, releasing limited information while emphasizing uncertainty.

However, this approach carries its own risks. The absence of transparency can erode public trust and fuel speculation.

In the case of Minab, competing narratives have proliferated, with some attributing the strike to deliberate targeting and others to systemic failure.

Without a credible, transparent investigation, these narratives are unlikely to be resolved.

Historical parallels and the legacy of mistrust

The Minab incident inevitably invites comparison with the 1988 downing of Iran Air Flight 655 by the United States Navy.

In that case, the guided-missile cruiser USS Vincennes misidentified a civilian airliner as a hostile military aircraft and shot it down, killing all 290 people on board.

The similarities are striking.

Both incidents occurred in periods of heightened tension.

Both involved misidentification and resulted in significant civilian casualties. In both cases, the United States did not accept criminal responsibility.

The commanding officer of the Vincennes, William C. Rogers III, later received a commendation, a decision that was widely criticized in Iran and beyond.

These historical echoes contribute to a deep and persistent mistrust between Iran and the United States.

The Minab strike is not perceived in isolation but as part of a pattern in which advanced military capabilities fail to prevent civilian harm and accountability remains limited.

Stakeholder divergence and the future of AI warfare

The Minab incident also highlights a growing divide among technology stakeholders.

Companies like Palantir Technologies emphasize the integration of data and analytics into military operations, arguing that such systems enhance precision and reduce uncertainty.

By contrast, Anthropic and similar organizations advocate for stringent safeguards, warning that the rapid deployment of artificial intelligence in warfare could lead to catastrophic outcomes.

This divergence reflects a broader debate about the role of technology in conflict.

Should artificial intelligence be deployed as rapidly as possible to maintain strategic advantage, or should its use be constrained until robust ethical and technical frameworks are in place?

The answer to this question will shape the future of warfare.

If current trends continue, artificial intelligence will play an increasingly central role in targeting, logistics, and decision-making. Without effective safeguards, the risk of incidents like Minab may increase rather than diminish.

Conclusion

The Minab school strike is a defining event in the evolution of modern warfare.

It encapsulates the challenges of integrating advanced technologies into complex operational environments while maintaining adherence to legal and ethical standards.

Although the precise chain of events leading to the strike remains under investigation, the available evidence points to a combination of outdated intelligence, systemic vulnerabilities, and human decision-making under pressure.

Artificial intelligence may have contributed to the process, but it did not replace human responsibility.

The broader implications are clear. Advanced technologies have increased the speed and reach of military operations, but they have not eliminated the risk of error.

Indeed, by compressing decision timelines and obscuring causal pathways, they may make accountability more difficult to achieve.

Whether the Minab incident is ultimately classified as a war crime will depend on the findings of ongoing investigations.

Regardless of the legal outcome, its strategic and moral impact is already profound.

It has deepened mistrust, intensified debates about artificial intelligence, and underscored the urgent need for clearer frameworks governing the use of technology in warfare.

In the absence of such frameworks, incidents like Minab may not remain isolated.

They may instead become a recurring feature of a new and uncertain era in which the boundaries between human judgment and machine decision-making are increasingly blurred.